Measure what is measurable, and make measurable what is not so.

~ Galileo Galilei

i have sat through more versions of the same meeting in the past couple of years than i care to admit. It always opens with a slide, sometimes a graph, and nearly always the same sentence spoken with the confidence of a human (for now) who has recently discovered fire:

AI Coding is making our engineers a buh-zillion times more productive.

~ erryone errywhere

Mehbeh. Or Mehbeh Not. This is the part nobody wants to say out loud. Just like workers who act like they don’t prompt errythang to you know where and back for literally erry-thang.

We just made it ten times easier to generate noise, ship half-finished thoughts, and call the resulting churn velocity. Look, everyone, we are agile! (“Hey where did my post it drop of the ah-gill-ee board….”)

i write this from the perspective of someone who has spent the better part of (ahem many) decades pushing bits around many types of systems, and the last several years staring directly into mission-critical workloads where a bad decision does not become a JIRA ticket or Github issue, it becomes a phone call at “Oh Dark-Thirty” local.

However, in the creation of mission-critical systems: Surgical. Defense. Logistics. Edge inference. Real-time orchestration across systems that do not forgive sloppy thinking. In those environments, you learn very quickly that productivity is not a V I B E. It is a measurable flow of high-quality decisions under constraint, and the minute you stop respecting the constraint, the system starts eating you.

AI-assisted coding did not repeal that law. It rewrote the constraint while you were grabbing another piece of pineapple ham pizza or doomscrolling.

The Unit of Work Has Changed, Quietly

Historically, the scarce resource in software was human attention. Cognitive load. Coordination overhead. The dreaded two-pizza meeting that somehow required four pizzas and resolved nothing, except everyone wondering who would take the last piece of pineapple ham. We measured productivity badly because we were measuring the wrong substrate lines of code, commits, deploys, proxies layered on top of proxies. But at least the constraint itself was stable. You had engineers. They had hours. Work flowed, or it didn’t.

Then “IT” arrived a little sooner than many of us had planned, because although WE always had hoped it would arrive, IT came in a different gift wrapping. Backpropagation was back in vogue, then came The Transformers and Deep Learning, then a pseudo-CLI where you typed a “prompt,” and then the floodgates opened: Claude Code arrived. Grok (nice model distilling there bros). Cursor (60B anyone?). A pile of agentic tooling that fundamentally changed what developers do all day. We used to spend time in deep thought, designing and thinking between compiles, but now, with the humans_still_in_the_loop, a very large fraction of the typing, scaffolding, and even architectural drafting happens inside The All Knowing Model.

Ah! Eureka! Sounds like liberation until you realize you have silently replaced one constraint with another.

This new constraint is tokens[1].

Tokens are not a fuzzy abstraction. They are a first-class engineering resource, sitting right next to our beloved CPU,GPU, memory, and network, with a dollar sign attached to each. If you run a serious org, you now have a line item that looks a lot like compute spend because that is exactly what it is. And for the first time in the history of software engineering, we can draw a clean line from idea through generation through acceptance through deployed capability with a real economic cost attached to each step. That is a gift. It is also a trap because if you do not instrument it, it will quietly devour your margin and your architecture.

Tokens are evolving into a unifying primitive across the AI stack. They function simultaneously as an economic unit, where every token is billable, forecastable, and optimizable; as a scheduling unit, mapping directly to GPU time slices through prefill and decode cycles, queueing behavior, and overall throughput; and as a cognitive unit, defining the boundary of what a model can see, reason over, and retain within its context window. That, however, is only the surface layer.

Underneath, something more fundamental is taking shape: tokens are becoming the abstraction layer that unifies currency, memory, and compute. As a currency, they represent the first truly granular pricing primitive for intelligence not measured per model or per request, but per unit of reasoning, effectively per “thought fragment.” As memory, they define bounded buffers of context, where anything outside the token window is effectively forgotten unless explicitly rehydrated through retrieval or summarization. And as compute, tokens directly drive system behavior: they determine prefill workloads, which are parallel and compute-bound, as well as decode dynamics, which are sequential and constrained by memory bandwidth and latency.

tokens = currency + memory + compute abstraction

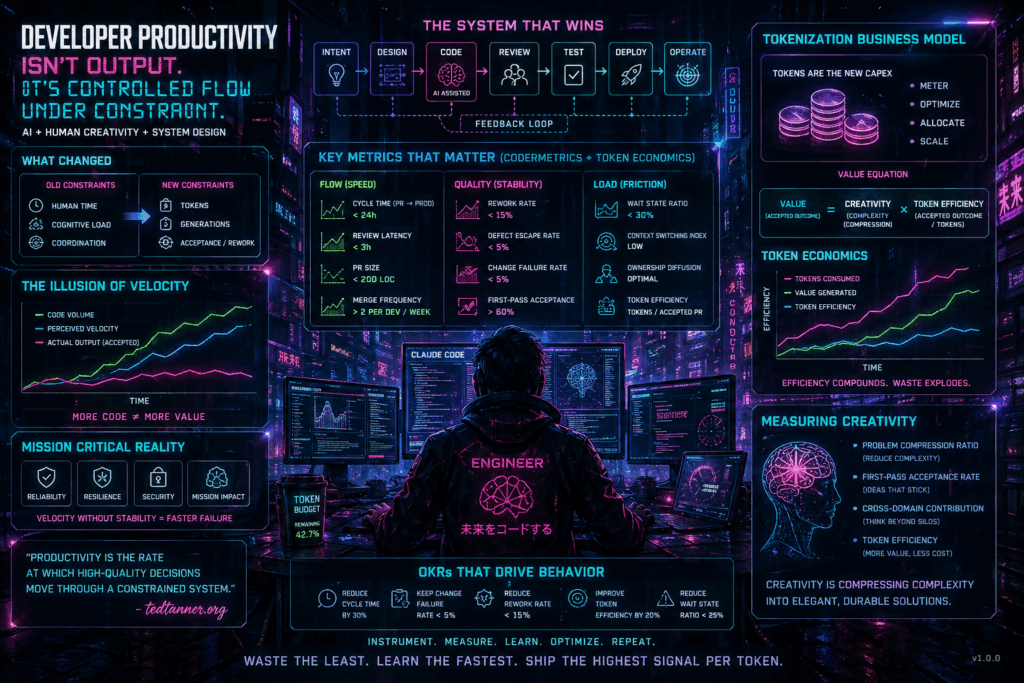

The Illusion Of Velocity

Here is what every team sees in the first ninety days after rolling out AI-assisted development or some-thang.

PR volume goes up (ah i do hope you are even tracking them please do so, Do At Least Some-Thang). Cycle time appears to drop. Engineers report feeling faster and to be fair, they are, in the same way that a cyclist going downhill is faster than one going uphill. Leadership sees the dashboard, nods sagely[2], and declares the transformation a success. Someone updates the dreaded disease: the slideware.

Then you look one layer down, and the picture changes.

Rework climbs acting a whole lot like refactoring to somewhere? Review latency balloons because humans are now the bottleneck in a pipeline that used to be bottlenecked by writing. Architectural drift accumulates in places nobody is watching, because generation is cheap and correction is not. You did not accelerate delivery. You increased the rate at which unfinished thoughts enter the system. In a toy app this is fine. In a system that has to hold under adversarial load, it is a slow-motion incident waiting to page you.

This is the part that matters for anyone running mission-critical platforms: the system does not care how many tokens you burned or how many PRs you opened. It cares whether the thing worked, whether it held under stress, and whether it reduced the uncertainty of the next decision. Everything else is theater.

Productivity Is Flow Under Constraint — Still

The one model that survives every generation of tooling, from punch cards to Claude Code, is this: developer productivity is the rate at which high-quality decisions flow through a constrained system. AI does not repeal that. It compresses one segment of the pipeline generation and in doing so, it exposes every other weakness you had been quietly tolerating. The queue that used to hide behind slow typing now stands out like a sore thumb. The ambiguous ownership, masked by low throughput, now creates explicit collisions. The review process you always meant to fix becomes the single largest source of wait state in the system.

Three failure modes appear almost immediately, in the same order every time.

The first is batch-size inflation dressed up as speed. Engineers, armed with a model that will happily generate a thousand lines in a minute, begin opening larger PRs. Larger PRs review slower, hide more defects, and carry more coordination tax. Cycle time goes down for the author and up for the team. Net throughput falls, but it falls later, so nobody connects the dots.

The second is rework explosion again, recursion at its finest, a fractal symphony of if-then-again. First drafts are cheap now. Correct systems are not. When you watch the seven-day rewrite rate on AI-generated code, you often see it creep past twenty-five percent before anyone raises a hand. That is not productivity. That is paid trash. You are converting tokens into heat. Joules down the drain. Mother nature doesn’t like that, you know.

The third, and the one that tends to surprise people, is wait-state dominance. Once writing is no longer the bottleneck, every other stage of the pipeline becomes visible reviews, CI, environment provisioning, release gates, ownership ambiguity and most organizations were never designed to operate with those segments under scrutiny. A third and fourth pair of “Cross-Eyed” Eyes On Glass. The model did its job. The system around it did not.

What You Actually Measure

I have argued for years, including in CEO OKRs → CTO Metrics, that the job of the CTO is to translate business outcomes into a set of instrumented signals that behave like a control system, not a quarterly report. That argument becomes more important, not less, the moment tokens enter the stack.

There are four layers worth discussing, and they nest.

Flow is still the backbone. Cycle time from PR to production, review latency, and merge frequency the standard DORA-adjacent surface. The twist is that flow is only meaningful when normalized against token consumption. If cycle time is dropping but tokens per accepted change are rising superlinearly, you are not getting more efficient. You are subsidizing the illusion of speed with computing.

Quality is where most AI-assisted teams quietly fail. The signal i care about most is not defect count. It is rework velocity how quickly generated code gets rewritten. Anything rewritten inside seventy-two hours of landing is, by definition, an unstable artifact. If that number climbs, your model is producing plausible code that the system rejects. Catch it early, or pay for it architecturally.

Load, meaning cognitive and system friction, is the hidden layer. Wait-state ratio time a unit of work spends idle, divided by total cycle time, tells you where your pipeline is actually broken. Context-switching index tells you whether engineers are still doing deep work or have been reduced to prompt-and-approve operators. Ownership diffusion tells you whether accountability has been silently distributed into the ether.

Creativity is the one everyone waves their hands at, because it is hard, and because most measurement frameworks collapse the moment you try to quantify it. I want to take that seriously for a moment, because I think the AI era is actually the first time we have had the instrumentation to do it honestly.

Measuring Creativity Without Killing It

Creativity is not output volume. It is not tokens generated. It is not a commit count. Those are the things that look like creativity from a distance and fall apart on contact.

Creativity, as best i can define it in an engineering context, is the compression of complexity into a durable, elegant, high-impact solution. You are taking a messy problem and returning something that is smaller, clearer, more general, and more stable than what you started with. That is the thing we actually pay senior “engineers and creatives” for. It is also the thing that models cannot yet do reliably on their own and the thing that, if measured badly, we will incentivize people to stop doing entirely.

You cannot measure creativity directly, but you can measure its footprint.

Problem compression ratio asks how much scope, code, or complexity disappeared between the initial specification and the shipped solution.

Good engineers delete more than they add. Great creatives reframe the problem so that most of it never needed to be built. Please, folks, understand WHY before you design. Re-wind. Re-Read.

It is only a small step to measuring “programmer productivity” in terms of “number of lines of code produced per month”. This is a very costly measuring unit because it encourages the writing of insipid code, but today I am less interested in how foolish a unit it is from even a pure business point of view. My point today is that, if we wish to count lines of code, we should not regard them as “lines produced” but as “lines spent”: the current conventional wisdom is so foolish as to book that count on the wrong side of the ledger.

~ Dijkstra, E. (1987)

First-pass acceptance rate for both humans and model-generated changes tells you how often a proposed solution lands without substantial rework. In an AI-assisted world this is a double signal: it tells you about the author’s judgment and about the quality of the context they are giving the model.

Cross-domain contribution (aka project mobility) captures engineers who solve problems outside their usual lane. This is the single best leading indicator of durable technical leadership i have ever tracked. It does not scale infinitely, but its absence is diagnostic.

Token efficiency: tokens consumed per accepted, shipped, non-reworked change is the new one, and it is the one I find most honest. Because it ties cognition to economics in real time. If a team’s token efficiency is improving quarter over quarter, they are getting genuinely better at converting machine cognition into durable capability. If it is flat while spending is rising, you are paying for activity, not value. Busy is as Busy does they say.

The Token Budget Is CapEx Now

Treat it that way. I mean that literally.

For the first time, we can tie engineering output to a continuously metered economic cost. Not a quarterly cloud bill. Not a headcount ratio. A real-time, per-change, per-feature, per-decision cost of cognition. That is a level of instrumentation that finance organizations have been begging for since the first mainframe. We should not squander it by hiding it inside a developer tools P&L line item and never looking at it again.

I have a dream with that one pull request or that feature designed by that amazing product person, we can map it directly to the valuation of the company.

~ tctjr

The conversation at the leadership level stops being how productive is this creative, a question that was always slightly degrading and almost always wrong, and becomes how efficiently is this system converting tokens into mission-ready capability. That is a question you can actually answer, and more importantly, one you can act on without reducing human beings to a throughput figure.

OKRs That Force The Right Behavior

If you run a mission-critical engineering organization and you are serious about this, vague objectives are worse than no objectives. Being told these objectives when you are the one creating, designing, and building is even worse. You want constraints that bend behavior in a specific direction. Below is roughly what i would write for a platform team shipping into a real-world, high-consequence environment adapt to taste.

The objective is to increase the deployment velocity of mission-critical capabilities without increasing system risk or compute cost per unit of delivered value. That is the whole thing. It is not clever and it is not supposed to be. Mission-critical systems reward extreme clarity.

Underneath that, i would set key results that operate as a connected system: reduce cycle time by thirty percent, hold change failure rate below five percent, drive rework rate under fifteen percent, improve token efficiency tokens per accepted PR by twenty percent, and collapse wait-state ratio below twenty-five percent. Each of those moves a different lever, and moving any one of them in isolation will surface the tension with the others, which is exactly what you want. An OKR set that cannot be gamed by optimizing a single axis is an OKR set that is actually doing its job.

At the operational layer, the KPIs you look at weekly (even daily?), not quarterly, you want a very short list on the wall or an agent to display it on all the screens in the company: cycle time, rework rate, wait-state ratio, token cost per shipped feature, and acceptance rate of AI-generated code. Five numbers. If you cannot tell the story of your engineering organization with five numbers updated weekly, you do not have a control system; you have a reporting habit. And in a mission-critical environment, drift is not a quarterly problem. Drift compounds in hours.

The Weekly Conversation Is The Real Artifact

i want to be careful here, because the point of all this instrumentation is not to build a more beautiful dashboard. It is to change the conversation.

The right weekly conversation, with the right five numbers on the wall, sounds like this. Why did rework tick up this week is it a specific surface, a specific author, a specific model context, or something structural? Where are tokens being wasted are we paying for retries, for bad prompts, for agents stuck in loops? Which stage of the pipeline is accumulating wait and is that stage bottlenecked by people, tooling, or ownership? Are we shipping decisions, or are we generating artifacts that look like decisions?

If those questions are not being asked weekly, by someone with the authority to actually change the system, the system will drift. Quietly at first. Then all at once, usually on a weekend.

The Pattern That Keeps Showing Up

After enough cycles through enough organizations, one pattern keeps winning. The best teams are not the ones moving fastest in any one step. They are the ones where less gets stuck, less gets rewritten, and less gets wasted. That has always been true. AI did not change it. AI just made the deltas larger, faster, and more expensive in both directions.

The winners of the next five years will not be the teams that generate the most code. They will be the teams that waste the least, learn the fastest, and convert intent into reality with the highest signal-per-token they can sustain. The losers will ship more code than ever before, pay more for it than ever before, and create less value doing it than they did in 2019.

Closing Thought

We are not entering an era of AI-driven development. That framing is lazy, and it offloads the thinking to a model that is not qualified to do the thinking. What we are actually entering is an era of token-constrained, system-optimized, human-plus-machine engineering, which is a mouthful, but it is the honest description.

In that world, the constraint is no longer time. It is not even worth attention. For now, it is tokens, attention, and system friction, measured together, optimized together, and treated as a single economic object.

If you get that right, you do not just improve developer productivity. You build an organization that can continuously convert ideas into reality faster, cheaper, and with far higher confidence than whoever you are competing against. If you get it wrong, you will ship more than ever and mean less than ever.

That, at the end of the day, is the difference.

Measure flow. Kill wait states. Shrink work units. Respect the token. Everything else is just more code.

Until Then,

#iwishyouwater <- The Wedge March 2026.

Be Safe.

Muzak To Blog By: Yamandú Costa, Vagner Cunha: Interpreta Concerto para Violão de 7 Cordas. This is a technically astounding piece of work. Amazing classical guitar. The recording is astounding.

Foonotes:

[1] If you made it down the stack of turtles this far, thank you for your time and attention. As a side note the word tokenization as it is used in the LLM parlance. The term is overloaded in several technology areas, including Token-Based Authentication (e.g., JWT): After a user logs in, the server issues an encrypted “token” (such as a JSON Web Token) that the client sends with subsequent requests. This avoids re-entering passwords. Security Tokens (Hardware): Physical devices (like USB keys, YubiKeys) that generate temporary codes (OTP) to prove a user possesses the device. Network Tokens (Payments): Card networks (Visa, Mastercard) replace sensitive Primary Account Numbers (PANs) with secure tokens to improve authorization rates and security. Blockchain (the word that shall not be said) and Web3: Tokens as Digital Assets In blockchain, a token is a programmable, digital asset that lives on a pre-existing blockchain (like Ethereum), using smart contracts to define its behavior.Coinhouse Governance Tokens: Give holders voting power to dictate the future of a protocol. Utility Tokens: Provide access to a specific product or service within a platform (e.g., a token to access a decentralized storage network), and the list goes on and on. A token in a Large Language Model (LLM) is the fundamental, discrete unit of data that a model processes. Rather than reading text word-by-word, an LLM breaks text into smaller chunks (stemming, lemmatization, etc.), subwords, characters, or punctuation, which are then mapped to unique numerical identifiers. The cool kids term for this (from a long time ago) are “Embeddings”: These integer IDs are subsequently converted into vectors known as embeddings ![]() dimensional space that captures semantic relationships. Right now, most of these models are, at best, a stochastic parrot (not to be confused with ParrotHeads from Jimmy Buffett), or as i view it, just major-league regex-ing at the core. So why call it a stochastic parrot, you ask? Thank you for prompting, Polly did want a cracker… We are transferring one language into another, and this is a very inefficient transfer function or an inefficient compression algorithm, just like computer languages. It only parrots what it is taught, with tokenization being a business model. My “hot take” is that the parrot (tokenization business model) will eventually die. However, that is another story, Mehbeh, for another time. If you really want to get into the details, math and code go here: Architecture Behind LLMs and Context Windows

dimensional space that captures semantic relationships. Right now, most of these models are, at best, a stochastic parrot (not to be confused with ParrotHeads from Jimmy Buffett), or as i view it, just major-league regex-ing at the core. So why call it a stochastic parrot, you ask? Thank you for prompting, Polly did want a cracker… We are transferring one language into another, and this is a very inefficient transfer function or an inefficient compression algorithm, just like computer languages. It only parrots what it is taught, with tokenization being a business model. My “hot take” is that the parrot (tokenization business model) will eventually die. However, that is another story, Mehbeh, for another time. If you really want to get into the details, math and code go here: Architecture Behind LLMs and Context Windows

[2]FWIW i hate pineapple ham pizza.

[3] i always wanted to nod sagely with a pipe, but I do not like smoking.

Excellent post!! Really appreciate the criticality, nuance, and insights here, and also it may be pedantic but breaking down references to tokens and the meaning within AI world for now is appreciated. Perspective.

LLM is not trained to produce mission outcomes. It’s trained to produce the most probable and “good” language outputs.

I’m curious how much will need to be shifted were LLM to change from natural language… and will the cost currently to make more trusted, reliable, or verified output from LLMs as it is be too much? (Architecture shift?? But where?)

Not to make light, but related YouTube short that might give a giggle :p : https://youtube.com/shorts/xwNP5UxsAkg?si=fc7QobzYyDqom6rs

Rachael:

Thank you so much for taking your time and reading this article. Also thank you even more for making some cogent commentary concerning the content, you are spot on with the statements. FWIW the song in the video is one of my progenies favortie songs. Random, NotRandom.

Be Safe!

//ted